PointDrop: GPU Particle Sim

This was a quick project that I implemented in two evenings, inspired by this Twitter post by Frederik Vanhoutte. Very little information was provided, just that this was "The wrong way to use a SDF". My guess was that you could create this type of effect by distributing particles on a starting plane and letting them fall along a vector indicating gravity. They would continue along that vector until they were within some epsilon of the surface, where they would stop.
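To make that interpretation concrete, here is a minimal CPU-side sketch in Python. It is not the shader code from the project: the sphere SDF, its center and radius, the step size, and the names `sphere_sdf` and `drop` are all assumptions chosen for illustration.

```python
import math

CENTER = (0.5, 0.5, 0.5)  # assumed: a sphere in the middle of a unit cube
RADIUS = 0.25             # assumed radius

def sphere_sdf(p):
    """Signed distance from p to the sphere surface (negative inside)."""
    return math.dist(p, CENTER) - RADIUS

def drop(p, gravity=(0.0, 0.0, -1.0), step=0.005, epsilon=0.01, max_steps=1000):
    """Step p along gravity until it is within epsilon of the surface."""
    for _ in range(max_steps):
        if abs(sphere_sdf(p)) < epsilon:
            break  # close enough to the surface: the particle sticks here
        p = tuple(c + g * step for c, g in zip(p, gravity))
    return p

landed = drop((0.5, 0.5, 1.0))   # starts directly above the sphere
missed = drop((0.95, 0.5, 1.0))  # never comes near the sphere, keeps falling
```

The step has to be small relative to epsilon, otherwise a particle can jump straight over the thin band around the surface without ever stopping.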

Starting from this interpretation, I decided to experiment with atomic writes to an image buffer as a rendering method, as I had done previously in my GPU physarum simulation. Where that project operated in a plane, I was now dealing with three-dimensional points. A cheap projection is easy, though: just take the .xy of the three-dimensional point and map it to a location in the buffer.
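A rough Python sketch of that projection and accumulation follows. The `[0, 1]` coordinate range and the names `to_pixel` and `splat` are assumptions for illustration; in the real thing the increment would be an `imageAtomicAdd` in a shader, not a Python `+=`.

```python
def to_pixel(p, width, height):
    """Drop z and map x, y (assumed to lie in [0, 1]) to integer pixel coordinates."""
    px = round(p[0] * (width - 1))
    py = round(p[1] * (height - 1))
    return px, py

def splat(points, width, height):
    """Count how many points land on each pixel of a width x height buffer."""
    buf = [[0] * width for _ in range(height)]
    for p in points:
        px, py = to_pixel(p, width, height)
        if 0 <= px < width and 0 <= py < height:
            buf[py][px] += 1  # stands in for imageAtomicAdd on the GPU
    return buf

# Two points that differ only in z end up on the same pixel.
image = splat([(0.0, 0.0, 0.3), (0.0, 0.0, 0.9), (1.0, 1.0, 0.0)], 4, 4)
```

Since z is simply discarded, points at different depths pile up on the same pixel, which is what gives dense regions their brightness.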

In fact, this project took a lot of cues from the physarum sim. Since it was already implemented, I carried forward the progressive gaussian blur that maintains state in two buffers, ping-ponging each update. In this project, it softens the image in the buffer after the atomic writes take place and diffuses the values outwards.

The textures used are single-channel, 32-bit unsigned integer type, because OpenGL only supports imageAtomicAdd on 32-bit integer image formats (r32i and r32ui). Atomic writes like those done by imageAtomicAdd ensure that every write is tallied: after all points have written, the value in the buffer is the sum of the writes by all points. To guarantee this, each increment has to be applied to a single coherent copy of the value, so that the GPU does not accidentally increment a cached value which does not reflect recent writes by other execution units. For this reason, atomic operations have an inherent expense associated with their use. I have found that for applications like this, they still scale to the order of tens of millions of points doing these writes per update while retaining realtime framerates.
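The ping-pong blur can be sketched on the CPU like this. It is only an illustration under assumptions: a 3x3 kernel, clamped edges, and integer division are my choices here, and `blur_pass` and `run` are hypothetical names rather than anything from the project.

```python
KERNEL = ((1, 2, 1), (2, 4, 2), (1, 2, 1))  # 3x3 Gaussian weights, sum to 16

def blur_pass(src, dst):
    """Read from src and write the blurred result into dst (edges are clamped)."""
    h, w = len(src), len(src[0])
    for y in range(h):
        for x in range(w):
            total = 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    sy = min(max(y + dy, 0), h - 1)
                    sx = min(max(x + dx, 0), w - 1)
                    total += KERNEL[dy + 1][dx + 1] * src[sy][sx]
            dst[y][x] = total // 16  # integer buffers, so values round down

def run(buffers, passes):
    """Alternate which buffer is read and which is written on every pass."""
    front = 0
    for _ in range(passes):
        blur_pass(buffers[front], buffers[1 - front])
        front = 1 - front
    return buffers[front]  # the buffer written most recently
```

Reading from one buffer while writing to the other means no pixel ever sees a half-updated neighbor. With integer storage the division rounds down, so small values fade away by themselves over repeated passes.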

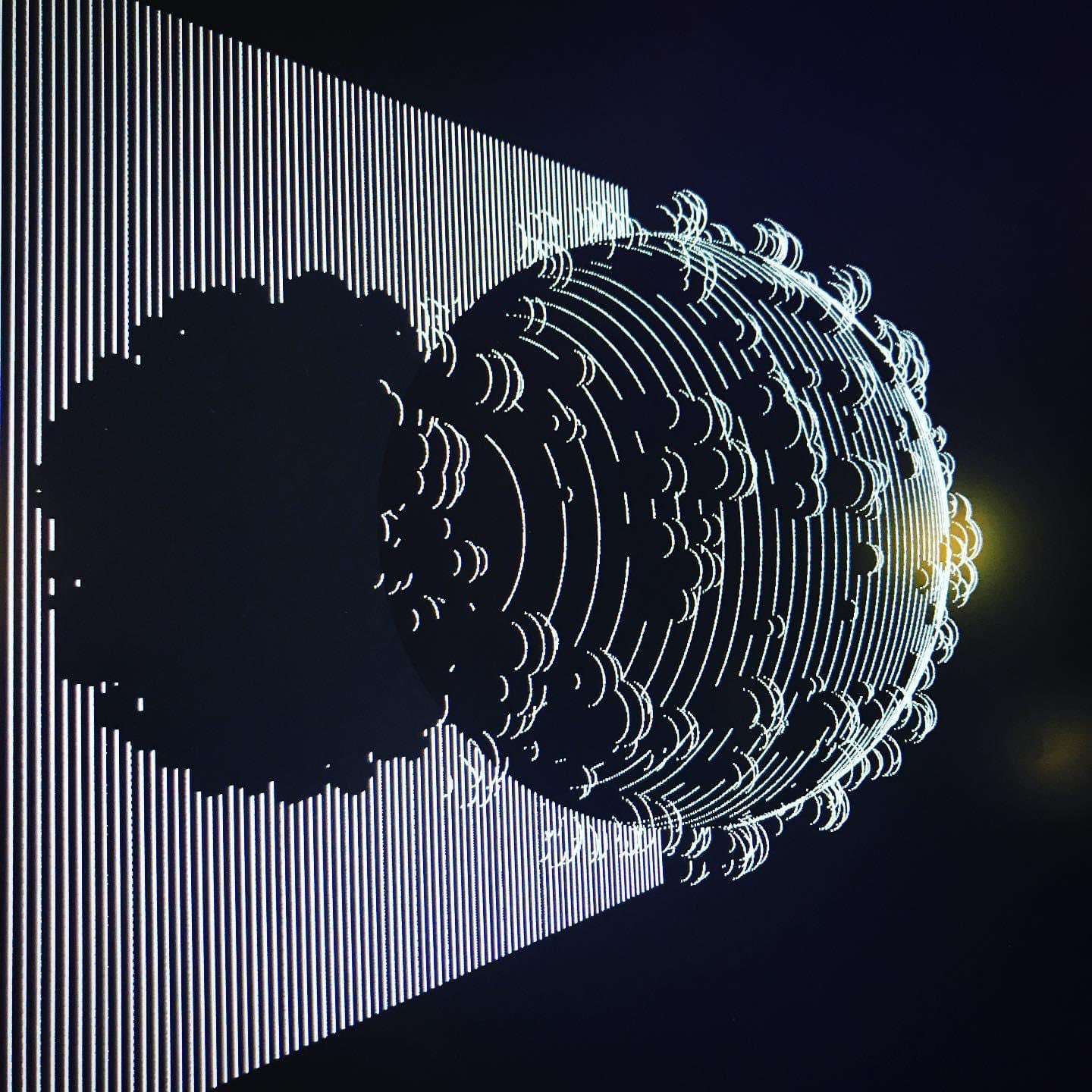

To make things more interesting, I had the thought to use the gradient of the SDF (or its negative) to drive the particle's position each update. To this end, I packed a couple million points into an SSBO, which held two vec3s per point. As you can see in the round part of the shape above, this produced some artifacts: I had forgotten that even with std430, a vec3 is aligned to 16 bytes, so tightly packed vec3 data does not line up with what the shader reads. I needed to swap them out for vec4s. The fourth element might be used as a mass value, atomic write amount, flag to set per-agent behavior, or something else, if I wanted to mess with this project further.
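The size mismatch is easy to see by laying the bytes out by hand. The sketch below uses Python's `struct` module purely as an illustration of the layout; the names are hypothetical and the project's actual upload code is not shown in this post.

```python
import struct

# Two vec3s packed tightly, as a naive CPU-side array of floats would be.
PACKED_VEC3_PAIR = struct.Struct("<3f3f")      # 24 bytes per point
# The same two vec3s as std430 lays them out: each aligned to 16 bytes.
STD430_VEC3_PAIR = struct.Struct("<3f4x3f4x")  # 32 bytes per point
# Two vec4s: the CPU and GPU layouts agree without any hidden padding.
VEC4_PAIR = struct.Struct("<4f4f")             # 32 bytes per point

def pack_points(points):
    """Pack (position, velocity) pairs as two vec4s per point, with w set to 0."""
    out = bytearray()
    for pos, vel in points:
        out += VEC4_PAIR.pack(*pos, 0.0, *vel, 0.0)
    return bytes(out)

data = pack_points([((1.0, 2.0, 3.0), (4.0, 5.0, 6.0))] * 2)
```

With the tightly packed version, the shader steps through the buffer 32 bytes at a time while the data advances 24 bytes at a time, so every point after the first is read from the wrong offset.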

What's more, you can get the appearance of more complex movement by applying a simple 3D rotation before taking the .xy swizzle, as you can see in the video here. I experimented with a few different variations:

- Having particles stick to surfaces, as in the reference, by having the position stop updating when they are within epsilon of a surface

- Particle acceleration along the SDF gradient, repelled by surfaces

- Particle acceleration direction set by the sign of the return value of the SDF

- Accelerating with the cross product of the SDF gradient and the current particle velocity

- Applying some damping to the particle velocity per update

- Clamping particle velocity to some range

- Different choices of where a particle would spawn back in after leaving the unit cube

- Different color ramps to use the single channel value in the buffer

- Changing the amount that the result of the gaussian blur decays each frame

- Adding a button event which calls a function to repopulate the SSBO with new point locations and velocities
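Several of the update variations above fit into one small function. This is a sketch under assumptions, not the project's shader: the sphere SDF, the central-difference gradient, the parameter values, the respawn rule, and every name here are hypothetical.

```python
import math
import random

CENTER = (0.5, 0.5, 0.5)  # assumed: sphere in the middle of a [0, 1] unit cube

def sphere_sdf(p, radius=0.25):
    return math.dist(tuple(p), CENTER) - radius

def gradient(sdf, p, h=1e-3):
    """Central-difference estimate of the SDF gradient at p."""
    g = []
    for axis in range(3):
        hi, lo = list(p), list(p)
        hi[axis] += h
        lo[axis] -= h
        g.append((sdf(hi) - sdf(lo)) / (2.0 * h))
    return tuple(g)

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def clamp_length(v, max_len):
    """Scale v down so its length does not exceed max_len."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c * max_len / n for c in v) if n > max_len else v

def update(pos, vel, dt=0.01, damping=0.99, max_speed=1.0, swirl=False, rng=random):
    g = gradient(sphere_sdf, pos)
    # Outside the shape the gradient points away from the surface (repulsion);
    # the swirl variant accelerates along cross(gradient, velocity) instead.
    accel = cross(g, vel) if swirl else g
    vel = tuple(damping * (v + a * dt) for v, a in zip(vel, accel))
    vel = clamp_length(vel, max_speed)
    pos = tuple(p + v * dt for p, v in zip(pos, vel))
    if any(c < 0.0 or c > 1.0 for c in pos):
        # Left the unit cube: respawn at a random location with zero velocity.
        pos = tuple(rng.random() for _ in range(3))
        vel = (0.0, 0.0, 0.0)
    return pos, vel
```

Calling `update` once per particle per frame corresponds to one dispatch of the simulation shader. Flipping the sign of `accel` turns the repulsion into attraction.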

And here are a couple more recordings of the realtime output. This was a fun little project to implement, and it's nice to have something of a smaller scope that I could call "done" after working on it for a couple of hours in the evenings.

I'd like to use this same method to visualize strange attractors, and maybe lidar point clouds. You could feasibly do atomic writes into three buffers to handle multiple channels of data, for colored point clouds, for example. You don't get any depth testing with this method, and I don't think there's an easy way to implement it, but I think it's an interesting way to get a lot of points drawn in realtime. I've also spoken to a friend about using a similar method for forward pathtracing: either simulating photons hitting an image sensor, or visualizing the rays from a light source by drawing lines between intersection points with this same method of atomic writes. I think some kind of forward pathtracing/photon simulation like this would be a very interesting thing to mess with.
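As a rough idea of what the three-buffer version might look like, here is a small Python sketch. It is untested speculation on the GPU side: `splat_rgb`, the `[0, 1]` coordinate range, and the 8-bit color values are all assumptions, and each `+=` stands in for an atomic add into a separate integer image.

```python
def splat_rgb(points, width, height):
    """Accumulate colored points into three separate single-channel buffers.

    points: iterable of ((x, y, z), (r, g, b)), with x and y assumed to be in
    [0, 1] and colors given as integers.
    """
    red = [[0] * width for _ in range(height)]
    green = [[0] * width for _ in range(height)]
    blue = [[0] * width for _ in range(height)]
    for (x, y, _z), (r, g, b) in points:
        px = round(x * (width - 1))
        py = round(y * (height - 1))
        if 0 <= px < width and 0 <= py < height:
            red[py][px] += r    # one atomic add per channel on the GPU
            green[py][px] += g
            blue[py][px] += b
    return red, green, blue

red, green, blue = splat_rgb(
    [((0.0, 0.0, 0.1), (255, 0, 0)), ((0.0, 0.0, 0.7), (0, 10, 20))], 2, 2)
```

Because the sums are order independent, overlapping points simply blend additively, which is also why there is no notion of one point hiding another.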