AirplaneMode: Simple Pathtracer with Explicit Primitives

This project started from discussions with Thomas Ludwig at Redshift, as a way to review some fundamentals of pathtracing on explicit primitives. I have continued on my own and ended up with a small batch generator of randomized pathtraced images. The name comes from Thomas' idea that you should know the concepts well enough to implement everything with the machine in airplane mode.

It is a completely CPU-side implementation of a tile-based renderer, with PNG output. It required some work to learn about threading, thread-safe PRNG, and maintenance of global state via atomics. In addition, I wrote all my own templated vector utilities for 2D and 3D vectors instead of using GLM on this project.

Materials

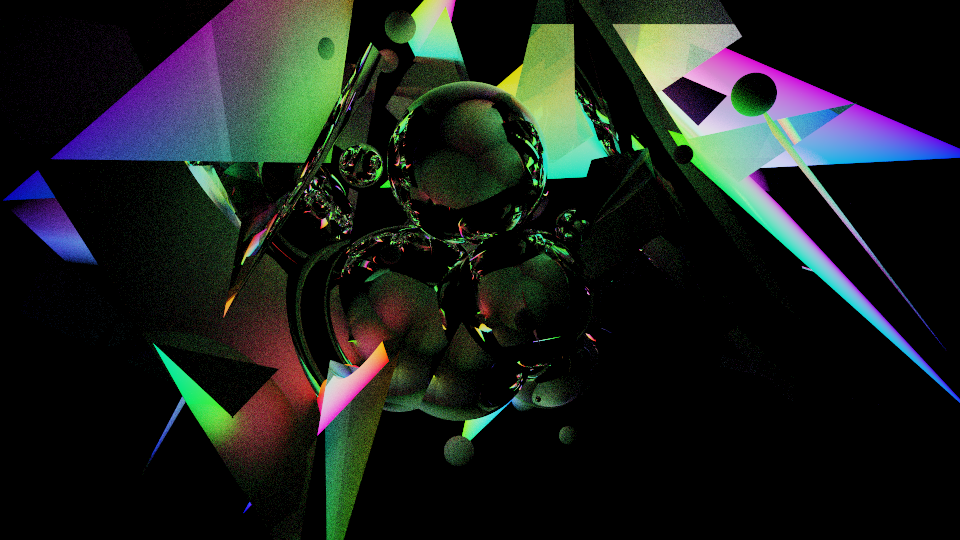

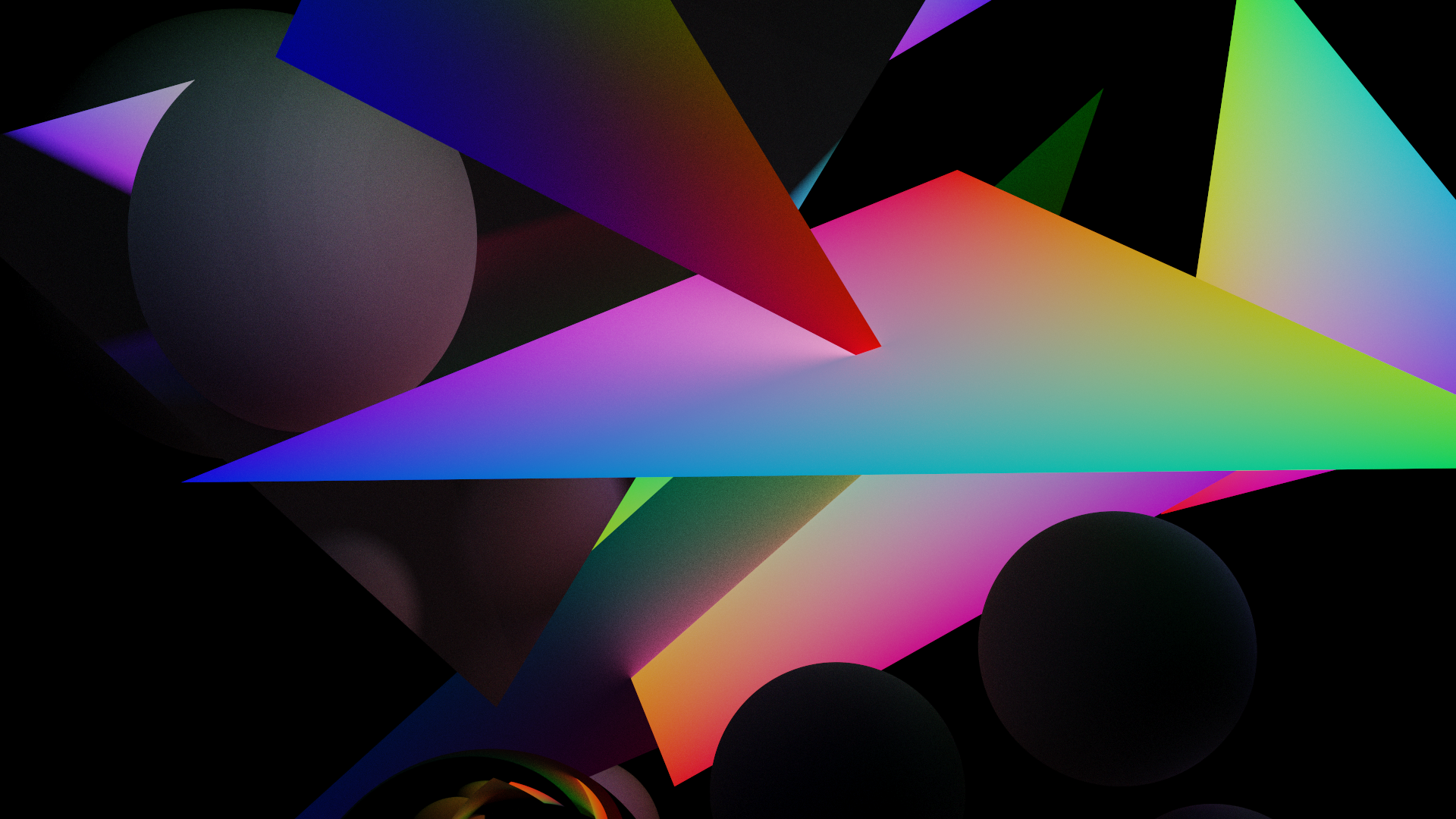

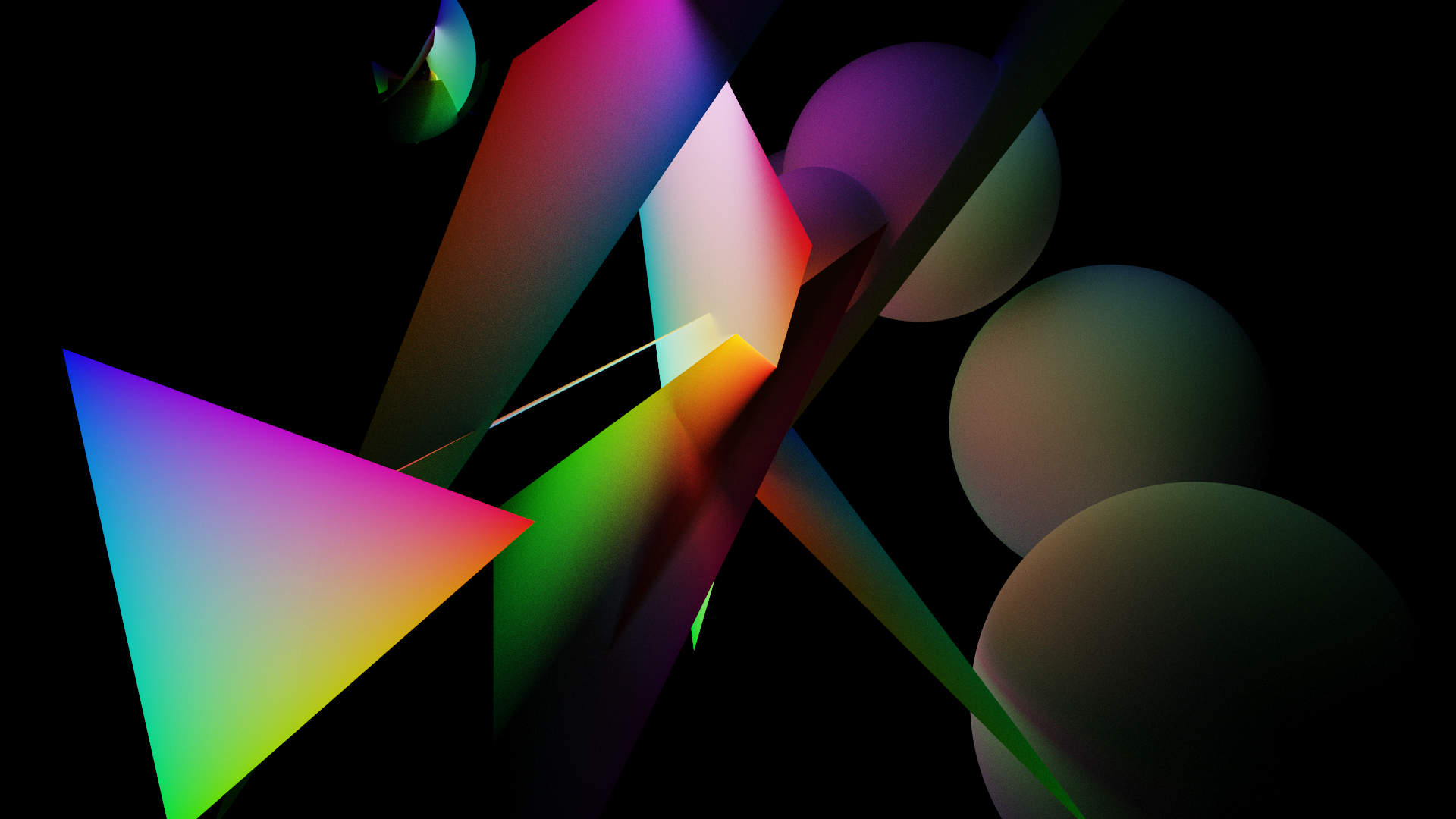

There are three types of materials included: diffuse, specular, and emissive. The selection logic that runs when the scene is generated determines which material is applied to which primitives. Creating some diversity here made for some pretty cool little outputs.

I have toyed with the idea of adding refractive materials to this program, but have not implemented it yet.

Primitives

This program makes use of explicit primitives. These are formulaic geometric representations that are solved against an incoming ray to get the nearest intersection point and other necessary data about that surface. In general this looks like 'plugging the ray in for the point': replacing the x, y, z terms with the ray's o+t*d representation and solving for t.
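
As a worked example of that substitution, take a sphere with center $\mathbf{c}$ and radius $r$ (symbols chosen here for illustration). The implicit surface and the ray are

$$
\lVert \mathbf{p} - \mathbf{c} \rVert^2 = r^2, \qquad \mathbf{p}(t) = \mathbf{o} + t\,\mathbf{d}
$$

and plugging the ray in for the point gives a quadratic in $t$:

$$
(\mathbf{d}\cdot\mathbf{d})\,t^2 + 2\,\mathbf{d}\cdot(\mathbf{o}-\mathbf{c})\,t + (\mathbf{o}-\mathbf{c})\cdot(\mathbf{o}-\mathbf{c}) - r^2 = 0
$$

A negative discriminant means the ray misses the sphere; otherwise the two roots are the near and far intersection distances.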

Spheres are a matter of solving for the roots of a simple quadratic. The two roots are the near and far intersection distances; on a positive hit, you take the closest positive distance, evaluate the hit point on the ray, and get the normal by subtracting the vec3 center point from that hit point and normalizing. This makes it very easy to do reflection.
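
A minimal sketch of that sphere test might look like the following. It is not the project's code: `Vec3`, `SphereHit`, and `intersect_sphere` are hypothetical names, the ray direction is assumed to be normalized, and `std::optional` requires C++17.

```cpp
#include <cmath>
#include <optional>

struct Vec3 { double x, y, z; };

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(Vec3 a, double s) { return {a.x * s, a.y * s, a.z * s}; }
inline double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

struct SphereHit {
    double t;     // distance along the ray
    Vec3 point;   // origin + t * direction
    Vec3 normal;  // unit normal pointing away from the sphere center
};

// Assumes `direction` has unit length, so the quadratic's leading term is 1.
std::optional<SphereHit> intersect_sphere(Vec3 origin, Vec3 direction,
                                          Vec3 center, double radius) {
    const Vec3 oc = origin - center;
    // |o + t*d - c|^2 = r^2  becomes  t^2 + 2*b*t + c = 0
    const double b = dot(oc, direction);
    const double c = dot(oc, oc) - radius * radius;
    const double disc = b * b - c;
    if (disc < 0.0) return std::nullopt;  // no real roots: the ray misses

    const double s = std::sqrt(disc);
    const double t_near = -b - s;
    const double t_far = -b + s;

    // Pick the closest root in front of the ray origin.
    const double eps = 1e-6;
    const double t = (t_near > eps) ? t_near : t_far;
    if (t <= eps) return std::nullopt;    // sphere is entirely behind the ray

    const Vec3 point = origin + direction * t;
    const Vec3 normal = (point - center) * (1.0 / radius);
    return SphereHit{t, point, normal};
}
```

For a ray starting at (0, 0, -5) and travelling along +z toward a unit sphere at the origin, this returns the near hit at t = 4 with normal (0, 0, -1). If the ray starts inside the sphere, the far root is used and the returned normal still points outward.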

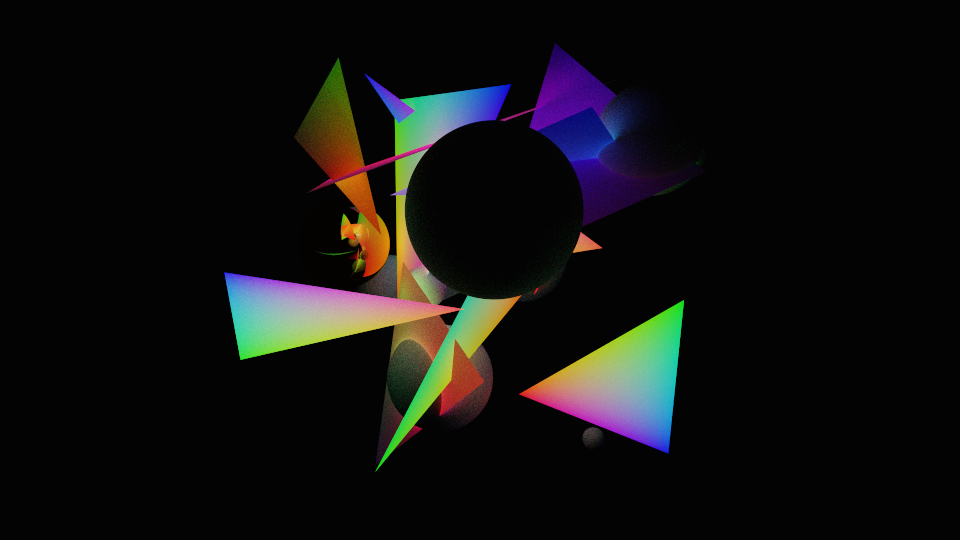

Triangles use the Möller-Trumbore intersection algorithm, which also gives you the barycentric coordinates of the hit. I had some fun applying an emissive material, colored by the barycentric coordinates, to a lot of the triangles. This algorithm is quick and has given good results so far.
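
For reference, a compact sketch of the Möller-Trumbore test is below. The names (`Vec3`, `TriangleHit`, `intersect_triangle`) and the epsilon value are assumptions for illustration, not taken from the project; this version accepts hits on both faces of the triangle.

```cpp
#include <cmath>
#include <optional>

struct Vec3 { double x, y, z; };

inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
inline Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}

struct TriangleHit {
    double t;  // distance along the ray
    double u;  // barycentric weight of v1
    double v;  // barycentric weight of v2 (weight of v0 is 1 - u - v)
};

std::optional<TriangleHit> intersect_triangle(Vec3 origin, Vec3 direction,
                                              Vec3 v0, Vec3 v1, Vec3 v2) {
    const double eps = 1e-9;
    const Vec3 e1 = v1 - v0;
    const Vec3 e2 = v2 - v0;

    const Vec3 pvec = cross(direction, e2);
    const double det = dot(e1, pvec);
    if (std::fabs(det) < eps) return std::nullopt;  // ray parallel to the triangle

    const double inv_det = 1.0 / det;
    const Vec3 tvec = origin - v0;
    const double u = dot(tvec, pvec) * inv_det;
    if (u < 0.0 || u > 1.0) return std::nullopt;

    const Vec3 qvec = cross(tvec, e1);
    const double v = dot(direction, qvec) * inv_det;
    if (v < 0.0 || u + v > 1.0) return std::nullopt;

    const double t = dot(e2, qvec) * inv_det;
    if (t <= eps) return std::nullopt;              // intersection behind the origin

    return TriangleHit{t, u, v};
}
```

The barycentric-colored emission described above could then be built from the three weights, for example by using (1 - u - v, u, v) directly as an RGB triple.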

With explicit primitives, you get analytical solutions for the ray intersections. This usually gives much more accurate intersections, and avoids a lot of the artifacts that you can easily get with an SDF-type scene representation.

Main Pathtracing Loop & Renderer Architecture

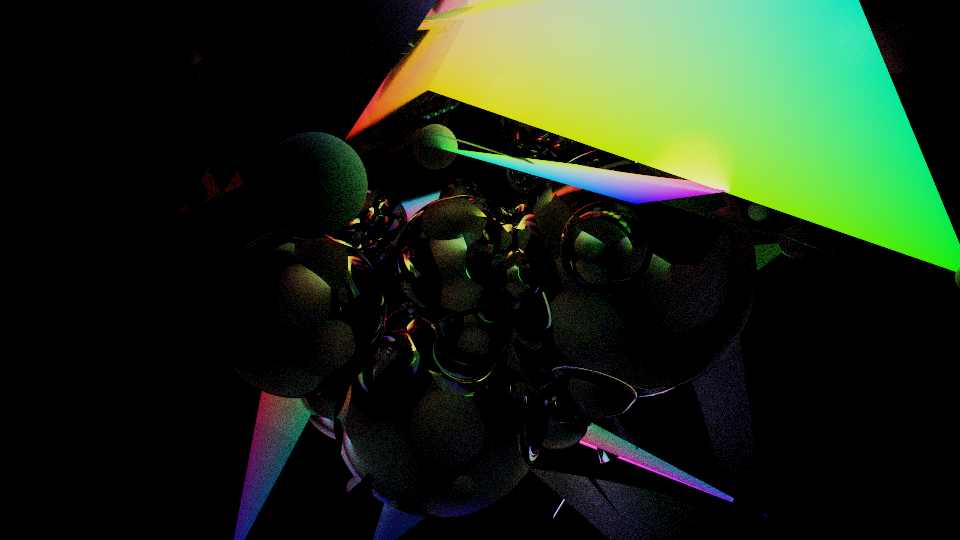

I have talked about the minimal materials I have been using before, but the basic concept is that you are accumulating light that would come in and hit the pixel. This process tries to emulate the physical reality of light, such that you consider all emission that comes from the surface under consideration, as well as light which comes from another surface and is incident on this surface. This incident light is what manifests in global illumination effects, like what you can see with the barycentric emission bleeding color onto the spheres on the right-hand side in the image above. This is achieved with the use of two variables: throughput and current. Throughput tracks how much the path so far attenuates light on its way back to the pixel, and current accumulates the light gathered along the path. Throughput is initially vec3(1.), and current is initially vec3(0.).

The behavior with respect to these two variables on surface hit varies by material:

| Type | Throughput | Current | Ray Direction |
|---|---|---|---|
| Diffuse | Scaled by the hit surface albedo | Untouched | Random vector in the hemisphere about the hit surface normal vector |
| Specular | Scaled by the hit surface albedo | Untouched | Random vector, skewed towards the reflection of the incident vector |
| Emissive | Untouched | Incremented by throughput times the hit surface emission | Same as diffuse, in my implementation |
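
The per-material behavior in the table can be sketched as a single update function. Everything named here (`Vec3`, `Hit`, `PathState`, `apply_hit`, the `roughness` parameter) is hypothetical, and the sampling choices are assumptions: the hemisphere direction is a uniform random unit vector flipped to the normal's side, and the specular skew is modeled as a small random jitter added to the mirror reflection.

```cpp
#include <cmath>
#include <random>

struct Vec3 { double x, y, z; };

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(Vec3 a, Vec3 b) { return {a.x * b.x, a.y * b.y, a.z * b.z}; }
inline Vec3 operator*(Vec3 a, double s) { return {a.x * s, a.y * s, a.z * s}; }
inline double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
inline Vec3 normalize(Vec3 a) { return a * (1.0 / std::sqrt(dot(a, a))); }

enum class MaterialType { Diffuse, Specular, Emissive };

struct Hit {
    MaterialType type;
    Vec3 albedo;
    Vec3 emission;
    Vec3 normal;  // unit length
};

struct PathState {
    Vec3 throughput{1.0, 1.0, 1.0};  // attenuation accumulated along the path
    Vec3 current{0.0, 0.0, 0.0};     // light gathered so far
    Vec3 direction{0.0, 0.0, 1.0};   // current ray direction (unit length)
};

// Uniform random direction: normalize three Gaussian samples.
inline Vec3 random_unit_vector(std::mt19937& rng) {
    std::normal_distribution<double> gauss(0.0, 1.0);
    for (;;) {
        const Vec3 v{gauss(rng), gauss(rng), gauss(rng)};
        const double len2 = dot(v, v);
        if (len2 > 1e-12) return v * (1.0 / std::sqrt(len2));
    }
}

inline Vec3 random_in_hemisphere(Vec3 normal, std::mt19937& rng) {
    const Vec3 v = random_unit_vector(rng);
    return dot(v, normal) < 0.0 ? v * -1.0 : v;  // flip onto the normal's side
}

inline Vec3 reflect(Vec3 incident, Vec3 normal) {
    return incident - normal * (2.0 * dot(incident, normal));
}

// Applies one row of the table. `roughness` is an assumed knob for the specular skew.
void apply_hit(PathState& state, const Hit& hit, std::mt19937& rng, double roughness = 0.1) {
    switch (hit.type) {
    case MaterialType::Diffuse:
        state.throughput = state.throughput * hit.albedo;
        state.direction = random_in_hemisphere(hit.normal, rng);
        break;
    case MaterialType::Specular:
        state.throughput = state.throughput * hit.albedo;
        state.direction = normalize(reflect(state.direction, hit.normal) +
                                    random_unit_vector(rng) * roughness);
        break;
    case MaterialType::Emissive:
        state.current = state.current + state.throughput * hit.emission;
        state.direction = random_in_hemisphere(hit.normal, rng);
        break;
    }
}
```

A path that hits a diffuse surface with albedo 0.5 and then an emitter with emission (2, 4, 6) ends up with current equal to (1, 2, 3): the emitter's light is scaled by the throughput picked up at the earlier bounce.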

The core logic of the renderer is very simple. I have a stripped-down version of it below. The function get_pathtrace_color_sample(x,y,id) will create a ray, send it out into the scene, and bounce it around up to some specified max bounce depth, with Russian roulette early termination. Upon termination, the value of current is taken as the pathtraced sample result.

    std::thread threads[NUM_THREADS+1]; // create thread pool
    for (int id = 0; id <= NUM_THREADS; id++){ // fork off and do work
      threads[id] = (id == NUM_THREADS) ? std::thread( // reporter thread
        [this, id]() {
          const auto tstart = std::chrono::high_resolution_clock::now();
          while(true){
            // reporting loop (break on same condition as worker thread)
            // issue '\r' carriage return
            // report timing info & percentage completion
            // wait ~0.1 seconds to avoid the overhead of a busy loop
          }
        }
      ) : std::thread(
        [this, id]() { // tile based renderer worker thread
          while(true){
            unsigned long long index = tile_index_counter.fetch_add(1);
            if(index >= total_tile_count) break; // termination condition
            // solve for x and y from the atomic int holding the current work index
            const int tile_x_index = index % num_tiles_x;
            const int tile_y_index = (index / num_tiles_x);
            const int tile_base_x = tile_x_index*TILESIZE_XY;
            const int tile_base_y = tile_y_index*TILESIZE_XY;
            for (int y = tile_base_y; y < tile_base_y+TILESIZE_XY; y++)
            for (int x = tile_base_x; x < tile_base_x+TILESIZE_XY; x++) {
              vec3 running_color = vec3(0.); // initially zero, averages sample data
              for (int s = 0; s < nsamples; s++) // get sample data (n samples)
                running_color += get_pathtrace_color_sample(x,y,id);
              running_color /= base_type(nsamples); // sample averaging
              tonemap_and_gamma(running_color); // tonemapping + gamma
              write(running_color, vec2(x,y)); // write final output values
            }
            tile_finish_counter.fetch_add(1); // this value used for reporting only
          }
        }
      );
    }
    for (int id = 0; id <= NUM_THREADS; id++)
      threads[id].join(); // join all threads to main thread
    // image output via stb_image_write
    stbi_write_png(filename.c_str(), xdim, ydim, 4, &bytes[0], xdim * 4);

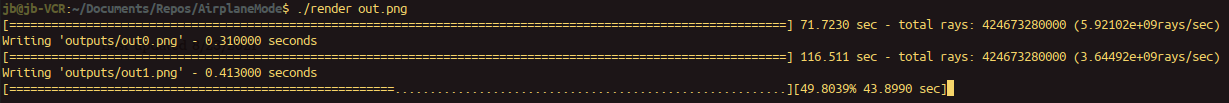

Threading was achieved with the use of lambdas, as you can see above, which create one worker thread per hardware thread on the machine, plus a reporter thread. The reporter thread reads the global atomic tile counter and indicates how many tiles are remaining. Using the carriage return, this gives a sense of the amount of time remaining on long jobs on a single line, where otherwise it would spew text or else be a headless operation until an image was produced. For my use case this kind of thing made a lot of sense.
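
The work distribution pattern can be isolated into a small self-contained sketch. The function names `dispatch_tiles` and `default_worker_count` are hypothetical, and the per-tile "work" is reduced to incrementing a visit count so the behavior is easy to check. Because `fetch_add` hands each index to exactly one thread, no tile is processed twice and no lock is needed.

```cpp
#include <atomic>
#include <thread>
#include <vector>

// Processes `num_tiles` tiles on `num_workers` threads and returns how many
// times each tile was visited (every entry should end up as 1).
std::vector<int> dispatch_tiles(unsigned long long num_tiles, unsigned num_workers) {
    std::vector<int> visits(num_tiles, 0);
    std::atomic<unsigned long long> next_tile{0};

    std::vector<std::thread> workers;
    workers.reserve(num_workers);
    for (unsigned id = 0; id < num_workers; id++) {
        workers.emplace_back([&visits, &next_tile, num_tiles]() {
            for (;;) {
                // Atomically claim the next unprocessed tile index.
                const unsigned long long index = next_tile.fetch_add(1);
                if (index >= num_tiles) break;  // all tiles have been claimed
                visits[index] += 1;             // only this thread owns `index`
            }
        });
    }
    for (auto& worker : workers) worker.join();
    return visits;
}

// hardware_concurrency() is allowed to return 0 when the count is unknown.
unsigned default_worker_count() {
    const unsigned n = std::thread::hardware_concurrency();
    return n == 0 ? 1 : n;
}
```

Calling `dispatch_tiles(total_tile_count, default_worker_count())` would mirror the worker side of the loop above; faster threads simply claim more tiles, which balances the load when some tiles are more expensive than others.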

Batch Mode Generation

I let this program run overnight on my laptop a number of times. When you come back, you can go through the folder of images and see what you got. Both appearance and execution time depend a lot on the settings of the scene population function and the renderer config. The largest factors are resolution, sample count, and primitive count.

This was a simple matter of clearing the old image, scene list, and camera parameters between images. Each run, these values were randomly generated with distributions from the standard `<random>` header.
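
As a rough illustration of that kind of scene population, the sketch below fills a list of spheres from `<random>` distributions. The struct layout, the function name `generate_random_spheres`, the value ranges, and the material weights are all assumptions made for the example, not the project's actual settings.

```cpp
#include <cstddef>
#include <random>
#include <vector>

struct Vec3 { double x, y, z; };

enum class MaterialType { Diffuse = 0, Specular = 1, Emissive = 2 };

struct Sphere {
    Vec3 center;
    double radius;
    MaterialType material;
};

// Builds a fresh random scene; ranges and weights are illustrative only.
std::vector<Sphere> generate_random_spheres(std::size_t count, std::mt19937& rng) {
    std::uniform_real_distribution<double> position(-5.0, 5.0);
    std::uniform_real_distribution<double> radius(0.1, 1.0);
    // Weighted material pick: mostly diffuse, some specular, a few emitters.
    std::discrete_distribution<int> material{0.6, 0.3, 0.1};

    std::vector<Sphere> scene;
    scene.reserve(count);
    for (std::size_t i = 0; i < count; i++) {
        const Vec3 center{position(rng), position(rng), position(rng)};
        const double r = radius(rng);
        const MaterialType m = static_cast<MaterialType>(material(rng));
        scene.push_back(Sphere{center, r, m});
    }
    return scene;
}
```

Seeding the generator from `std::random_device` gives a different scene each run, while logging a fixed seed alongside each output image would make a favorite result reproducible.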

Future Directions

I think something like this would be an interesting installation, just displaying an image each time it is generated. I like the idea of having hardware dedicated to rendering these randomly generated scene setups. I would like to investigate more materials, like more advanced BRDFs than my trivial models, and some refractive behavior. I would also like to look at more primitives, as well as BVH logic and OBJ model loading. A GPU implementation of this type of pathtracer might be worth pursuing, as well.